Conductor runs using your local Claude Code login. You can check your auth status by running claude /login in your terminal.

We also support running Claude Code on OpenRouter, AWS Bedrock, Google Vertex AI, Vercel AI Gateway, Azure AI Foundry, or any Anthropic API-compatible provider, such as GLM.

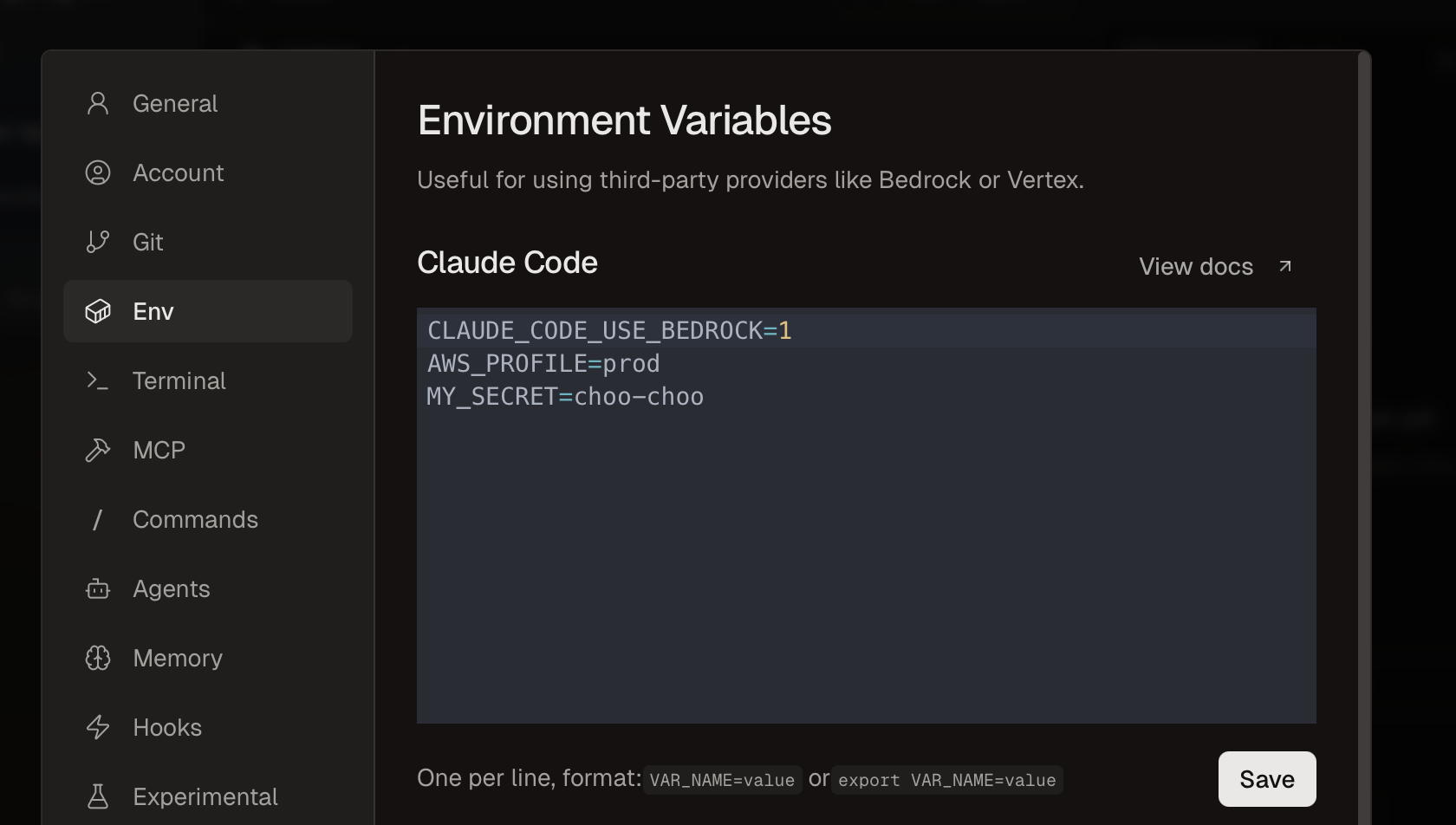

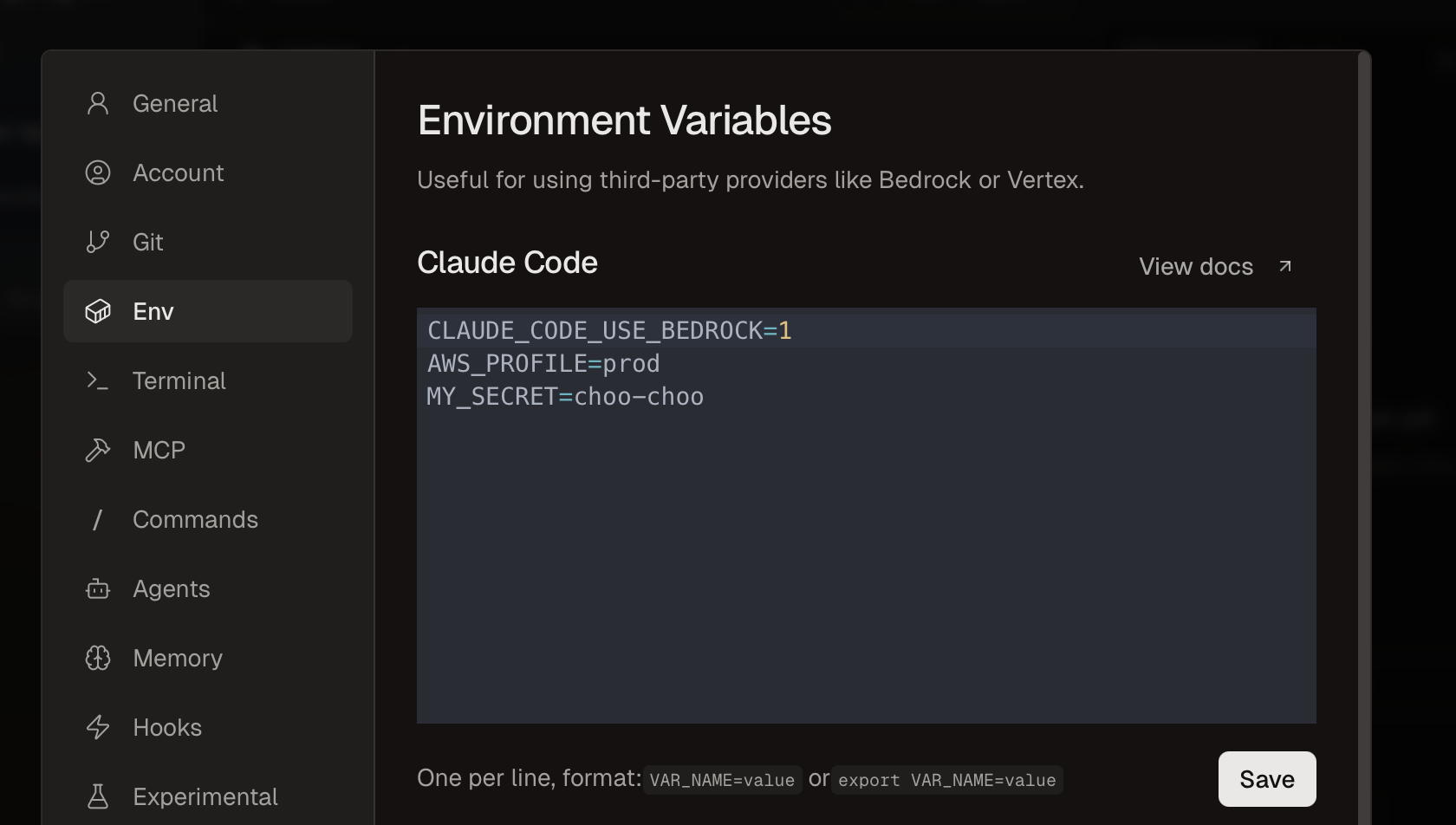

Go to Settings -> Env to set environment variables. Check out the Claude Code docs for a full list of environment variables.

When using Anthropic-compatible providers like OpenRouter, Vercel AI Gateway, or GLM, ANTHROPIC_API_KEY must be explicitly set to an empty string to prevent Claude Code from attempting to authenticate with Anthropic directly.
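To sanity-check these variables in a terminal, a minimal sketch along the following lines may help. The function name `check_compat_env` is hypothetical and is not part of Conductor or Claude Code. It assumes a POSIX shell and treats an unset `ANTHROPIC_API_KEY` as a failure, following the "explicitly set to an empty string" wording above.

```shell
# Hypothetical helper (not part of Conductor or Claude Code): checks that the
# environment matches the pattern described for Anthropic-compatible providers.
check_compat_env() {
  # ${VAR+x} expands to "x" when VAR is set (even to ""), and to nothing when unset.
  if [ -z "${ANTHROPIC_API_KEY+x}" ]; then
    echo "ANTHROPIC_API_KEY is unset; set it to an empty string"
    return 1
  fi
  if [ -n "$ANTHROPIC_API_KEY" ]; then
    echo "ANTHROPIC_API_KEY is non-empty; set it to an empty string"
    return 1
  fi
  # The base URL and auth token must both be present and non-empty.
  if [ -z "${ANTHROPIC_BASE_URL:-}" ] || [ -z "${ANTHROPIC_AUTH_TOKEN:-}" ]; then
    echo "ANTHROPIC_BASE_URL and ANTHROPIC_AUTH_TOKEN must both be set"
    return 1
  fi
  echo "ok"
}
```

After exporting the variables from one of the examples below, running `check_compat_env` should print `ok`. This only inspects the current shell's environment; variables entered under Settings -> Env are managed by Conductor and are not visible to this check.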

OpenRouter Example

export ANTHROPIC_BASE_URL="https://openrouter.ai/api"

export ANTHROPIC_AUTH_TOKEN="your-openrouter-api-key"

export ANTHROPIC_API_KEY=""

Vercel AI Gateway Example

export ANTHROPIC_BASE_URL="https://ai-gateway.vercel.sh"

export ANTHROPIC_AUTH_TOKEN="your-vercel-ai-gateway-api-key"

export ANTHROPIC_API_KEY=""

Bedrock Example

export CLAUDE_CODE_USE_BEDROCK=1

export AWS_REGION=us-east-1

export ANTHROPIC_SMALL_FAST_MODEL_AWS_REGION=us-west-2

GLM Example

export ANTHROPIC_BASE_URL="https://api.z.ai/api/anthropic"

export ANTHROPIC_AUTH_TOKEN="your-zai-api-key"

export ANTHROPIC_API_KEY=""

Azure AI Example

# Enable Azure AI Foundry integration

export CLAUDE_CODE_USE_FOUNDRY=1

# Azure resource name (replace {resource} with your resource name)

export ANTHROPIC_FOUNDRY_RESOURCE={resource}

# Or provide the full base URL:

# export ANTHROPIC_FOUNDRY_BASE_URL=https://{resource}.services.ai.azure.com

# Set models to your resource's deployment names

export ANTHROPIC_DEFAULT_SONNET_MODEL='claude-sonnet-4-5'

export ANTHROPIC_DEFAULT_HAIKU_MODEL='claude-haiku-4-5'

export ANTHROPIC_DEFAULT_OPUS_MODEL='claude-opus-4-5'
